Listener over InfiniBand on Exadata (part 1)

Nice topic, right? Beautiful thing to work with when you have Oracle Engineered Systems. So, why would you configure a listener over the InfiniBand Network? How does that make sense in your environment and how would you leverage that?

Background

First of all, it is important to understand that InfiniBand (IB) is a computer-networking communications standard used in high-performance computing that features very high throughput and very low latency. It is used for data interconnect both among and within computers. InfiniBand is also used as either a direct or switched interconnect between servers and storage systems, as well as an interconnect between storage systems. (Wikipedia)

Furthermore, we are going to enable the Sockets Direct Protocol (SDP) on the servers. SDP is a standard communication protocol for clustered server environments, providing an interface between the network interface card and the application. By using SDP, applications place most of the messaging burden upon the network interface card, which frees the CPU for other tasks. As a result, SDP decreases network latency and CPU utilization, and thereby improves performance. (Oracle Docs)

Imagine you have an Exadata full rack. This means you have 14 storage servers (aka storage cells or cells), eight compute nodes (aka database servers or database nodes) and two (or three) InfiniBand switches. Or, even better, you might have an Exadata with an elastic configuration, where you could have 18 compute nodes and only three storage servers because you need more compute power than storage capacity.

The procedure and the case presented here can also be applied to SuperCluster, Exalytics, BDA or any other Oracle Engineered System (except ODA) you might have in your infrastructure.

Contextualization

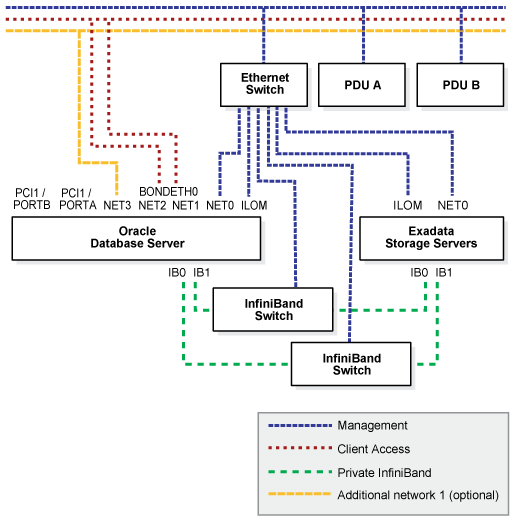

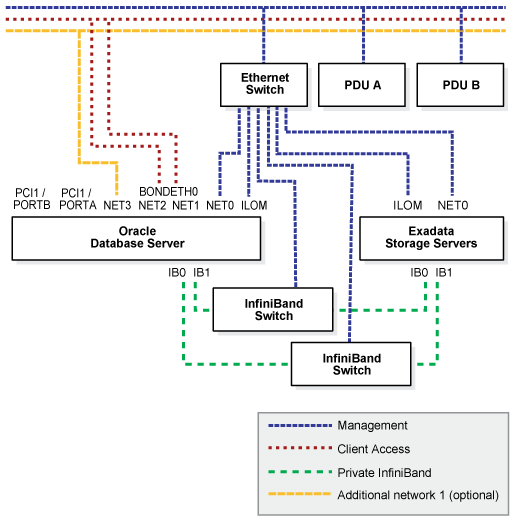

Now that we have some background, let's give you some context. You have an application running on one of your compute nodes, let's say exadb01, that consumes a lot of data from your database that runs on exadb02 and exadb04. This application can be a GoldenGate (GG) instance on a downstream architecture, for example.

When a database is accessing data stored in the storage servers, it uses the IB network. However, when some other application, such as a downstream GG instance, receives data from the database, the data is transferred via the public (10Gbps) network. This can cause a huge overhead on the network, depending on the volume of data that the downstream GG is receiving.

There is a way to configure the infrastructure to support the data transfer from the database to the downstream GG instance via the IB network. Please keep in mind that this works here only because the database and the GG instance are running on the same Exadata system.

GG receives data from the database to replicate somewhere else. The data is sent by the database to the downstream GG instance using a log shipping method, meaning one of the log_archive_dest_n parameters is configured to ship logs to the downstream GG instance.

The database we have to use in this approach generates ~21TB of redo data per day (~900GB per hour), so it is a huge amount of data to be transferred via a 10Gbps network. 900GB/h is 2Gbps, or 20% of the bandwidth. This does not seem like a lot, but this network is shared with other databases and client applications. In the IB network, this would consume only 5% if the IB ports are bonded for failover (40Gbps usable), or 2.5% if they are bonded for throughput (80Gbps usable).

It helps to look at how the network is set up in the Exadata to understand the solution. The solution proposed was to offload the data traffic from the Client Access network to the Private InfiniBand network. Since database servers also talk to each other using the private InfiniBand network, we can use it for some cases and leverage that when it makes sense. You do not want to do that if your environment is starving for I/O and is already consuming almost all the IB network bandwidth. OK, so configuring the log shipping through the IB network will make the client network breathe again.
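If you want to double-check the bandwidth numbers quoted above, here is a minimal Python sketch of the arithmetic. It is only a back-of-the-envelope check, not part of the configuration procedure. The function names are made up for illustration, decimal units (1 GB = 10^9 bytes) are assumed, and the 40Gbps and 80Gbps IB figures are the link speeds implied by the 5% and 2.5% values rather than measured ones.

```python
# Back-of-the-envelope check of the redo shipping bandwidth figures.
# Assumptions (illustrative, not measured): decimal units and IB link
# speeds of 40 Gbps (failover bonding) and 80 Gbps (throughput bonding).

def tb_per_day_to_gb_per_hour(tb_per_day: float) -> float:
    """Convert terabytes per day to gigabytes per hour."""
    return tb_per_day * 1000 / 24


def gb_per_hour_to_gbps(gb_per_hour: float) -> float:
    """Convert gigabytes per hour to gigabits per second."""
    return gb_per_hour * 8 / 3600


def utilization_pct(rate_gbps: float, link_gbps: float) -> float:
    """Share of a link consumed by a sustained rate, in percent."""
    return 100 * rate_gbps / link_gbps


# Hypothetical labels for the three networks discussed in the text.
LINKS_GBPS = {
    "client access (10GbE)": 10,
    "IB bonded for failover": 40,
    "IB bonded for throughput": 80,
}

if __name__ == "__main__":
    hourly_gb = tb_per_day_to_gb_per_hour(21)  # ~21TB of redo per day
    print(f"21 TB/day is about {hourly_gb:.0f} GB/h")

    rate = gb_per_hour_to_gbps(900)  # the rounded ~900GB/h figure
    print(f"900 GB/h is {rate:.1f} Gbps")

    for name, link in LINKS_GBPS.items():
        print(f"{name}: {utilization_pct(rate, link):.1f}% of {link} Gbps")
```

Keep in mind these are averages over a day. Redo generation is usually bursty, so the peak share of the client network can be well above the average.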
