Prioritizing Good Ideas

In our previous post we discussed the Lean Canvas (LC), a powerful tool for ensuring organizational alignment for targeted use cases. The LC gives us a foundation for the value to be realized and the supporting rollout plan for a use case. We recommend building these for five to seven early use cases, and then prioritizing them for implementation based on their relative value.

When developing your data strategy, there will inevitably be a large number of good ideas that come from the various working sessions, documents, stakeholder interviews and outside consultants. These good ideas should be captured in the form of a use case with an associated Lean Canvas. This can often lead to a daunting situation where organizations do not know where to begin or which investments to prioritize. Prioritized work should always be managed as a function of the impact on the business. This does not mean that every team or business unit will get the same return or value, but it does mean the organization as a whole will see benefits quickly and regularly.

At this stage in the process we are focused on relative measures of value for prioritization, not absolute returns or financial commitments. We will discuss in later posts how to effectively predict and measure value. This stage is about answering the common questions about what work will provide foundational capabilities and early wins for our data strategy program.

This exercise is about how best to apply our limited resources – money, time, people and vendor support. Our goal is to direct those resources toward the use cases and investment areas that will have the broadest impact on the organization for the least investment. This often involves difficult tradeoffs between building new capabilities and tackling tech debt, which can slow the implementation of necessary capabilities.

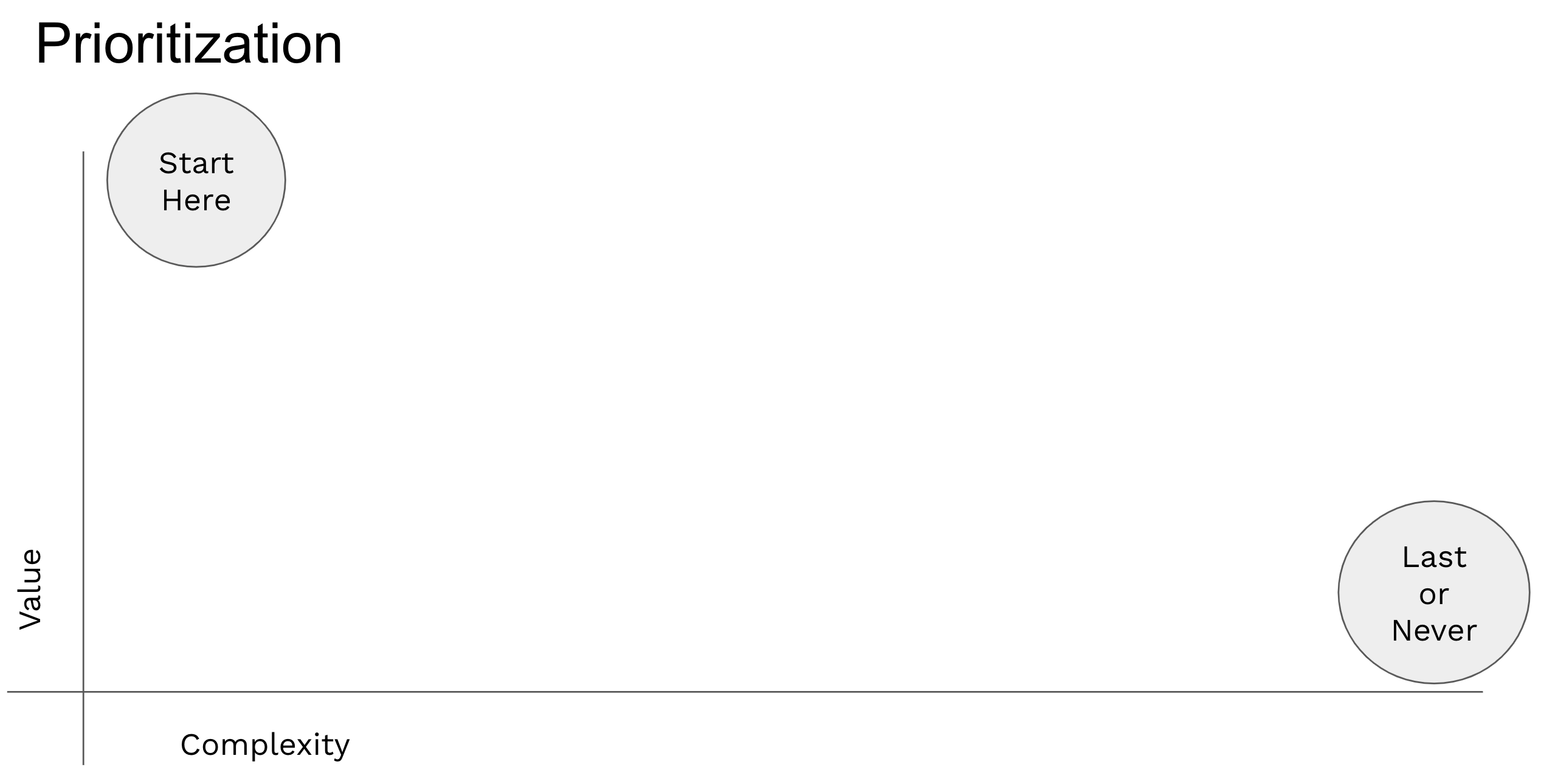

When prioritizing use cases for later sequencing and implementation, we leverage two dimensions:

- Value – This axis is the relative measure of return for each use case compared to the others on the map. It can represent revenue growth, operational savings or time. The most common approach is to color code each measure the organization agrees on, so the map quickly reveals the value realized for a given use case.

- Complexity – This axis is a measure of the difficulty of implementing the use case. This is often as simple as resources for a time period, but can also include more complex measures such as technical debt or dependencies that must be met to realize the value identified. Risk can play a part in this measure, but we recommend against including it, as risk at this stage can be highly subjective.

At this stage, both axes are relative, not absolute in their returns to the business. The goal at this stage is to prioritize our work, not plan out every detail or understand every risk. Traditional approaches for running transformation programs will assist with those planning aspects.
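
To make the relative ranking concrete, here is a minimal Python sketch. It assumes each use case has been given a relative score from 1 to 5 on both axes and orders them by value minus complexity. The scale, the scoring rule and the example use cases are all illustrative assumptions, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    value: int       # relative value, 1 (low) to 5 (high); assumed scale
    complexity: int  # relative complexity, 1 (easy) to 5 (hard); assumed scale


def prioritize(use_cases):
    """Order use cases so that high value and low complexity come first.

    The sort key (value minus complexity, ties broken by higher value,
    then by name) is an illustrative assumption, not a standard formula.
    """
    return sorted(
        use_cases,
        key=lambda uc: (-(uc.value - uc.complexity), -uc.value, uc.name),
    )


# Hypothetical use cases with made-up relative scores.
candidates = [
    UseCase("Real-time pricing", value=5, complexity=5),
    UseCase("Customer churn dashboard", value=4, complexity=2),
    UseCase("Legacy report migration", value=2, complexity=4),
    UseCase("Data catalog", value=3, complexity=2),
]

for rank, uc in enumerate(prioritize(candidates), start=1):
    print(rank, uc.name, f"(value={uc.value}, complexity={uc.complexity})")
```

Because the scores are relative, only the resulting order matters. The numbers themselves should not be read as financial estimates.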

There is one more important dimension to sequencing work – the technology sequencing component. We purposefully do not include it in this first pass prioritization, but it is an important part of taking our relative priorities and rankings and turning them into actionable commitments. Once we have our relative priorities, our technology teams can evaluate them for technical debt, dependencies and necessary foundational work. This will cause some shifts in the order in which we build, but should not change the value provided to the business.
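
One way to picture this second pass is as a dependency-aware ordering: keep the business ranking, but never schedule a use case before the work it depends on. The sketch below is a rough illustration using Python's standard `graphlib` module (Python 3.9 or later). The function name `sequence`, the rank numbers and the example dependencies are hypothetical.

```python
import heapq
from graphlib import TopologicalSorter


def sequence(priority_rank, depends_on):
    """Return a build order that respects dependencies.

    priority_rank: dict of use case name -> rank (1 = highest priority).
    depends_on: dict of use case name -> set of names that must come first.
    Among the use cases that are unblocked, the best-ranked one goes next.
    """
    for name, deps in depends_on.items():
        unknown = ({name} | set(deps)) - set(priority_rank)
        if unknown:
            raise ValueError(f"no priority rank for: {sorted(unknown)}")

    graph = {name: set(depends_on.get(name, ())) for name in priority_rank}
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies

    ready = []  # min-heap of (rank, name) for unblocked use cases
    order = []
    while sorter.is_active():
        for name in sorter.get_ready():
            heapq.heappush(ready, (priority_rank[name], name))
        _, name = heapq.heappop(ready)
        order.append(name)
        sorter.done(name)  # may unblock use cases that depend on this one
    return order


# Hypothetical example: the top-ranked use case needs the data catalog first.
ranks = {"Customer churn dashboard": 1, "Data catalog": 2, "Real-time pricing": 3}
deps = {"Customer churn dashboard": {"Data catalog"}}
print(sequence(ranks, deps))
```

In this example the data catalog moves ahead of the churn dashboard in the build order, while the business ranking of each use case stays the same.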

Often CIOs, CDOs and other executives will ask, “How can we go faster?” What they are really asking is how the teams can show progress sooner and more regularly on large, complex transformation projects. When challenged with this question, teams should consider two approaches that answer it and give executive teams comfort about the investment and returns:

- Decompose into smaller pieces – This approach is to take a single use case and break it down into smaller, incremental deliveries of value. While not always possible, this is an effective way to both showcase wins from the project and associated investments, while also getting earlier user feedback to correct in later phases. The idea of “fail fast” is an important cultural component to decomposing work and using the opportunity to both showcase value and gather feedback.

- Building momentum to accelerate over time – The first use case for a new data strategy initiative is always the most difficult to deliver. The organization is maturing technology, people and process simultaneously which inevitably causes conflict and delay. This early effort can pay off later in the project through higher velocity delivery.

Ultimately, the question of going faster is really about educating leadership on the approach to deliver work incrementally and build trust that the team can iterate effectively and then, later, accelerate. I like the “build the team and split” approach to acceleration over time. By taking a single high performing team and splitting them into two teams and adding additional team members, an organization can gain the value of early learnings and cultural habits while doubling the number of projects that can be effectively managed.

Once our data strategy is created we will inevitably identify a large number of potential use cases that will benefit the organization. Our ability to quickly prioritize those on relative terms enables later planning and execution to happen in an organized and beneficial manner. Using relative measures of value and complexity allows us to rank our use cases and begin handing them to teams to complete detailed planning and initiate execution.

In our next post, we will explore how to define the necessary workstreams to execute our data strategy. While much of our discussion has been about prioritizing individual use cases, grouping them allows for focused development and strong organizational change management as we roll out new capabilities.